Pinpointing the Latency Bottlenecks

Understanding where latency comes from and how it impacts users at scale is the first step to optimizing performance in global applications.

The Real-World Impact of Latency in 2026

In today’s hyper-connected world, even small increases in response time can affect user engagement, conversion rates, and overall trust in a platform. With users accessing services from a wider range of device types, networks, and geographies, application responsiveness has never been more critical.

Key risks of unmanaged latency:

Increased bounce rates for content-heavy or interactive apps

Revenue loss in e-commerce from slow cart or checkout flows

Reduced user satisfaction in collaboration, gaming, and live communication tools

User-Perceived vs. Network Latency

Latency isn’t just a technical metric; it’s also a user experience issue. It’s important to distinguish between what the system measures and what the user feels.

Understanding the gap:

Network Latency: The time it takes for data to travel between client and server

User-Perceived Latency: The total delay users experience, which includes rendering time, input delay, and server processing

Design and engineering teams must align on both forms of latency to prioritize meaningful improvements.
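To make the gap concrete, here is a minimal sketch that adds up the components of a single interaction. The `RequestTiming` class, its field names, and the sample numbers are hypothetical, chosen only to illustrate the breakdown.

```python
from dataclasses import dataclass


@dataclass
class RequestTiming:
    """Hypothetical per-request timings, all in milliseconds."""
    network_rtt_ms: float        # time on the wire between client and server
    server_processing_ms: float  # time the backend spends on the request
    render_ms: float             # time the client spends painting the result
    input_delay_ms: float        # time before the UI reacts to the user's input

    def perceived_ms(self) -> float:
        """Total delay the user experiences for this interaction."""
        return (self.network_rtt_ms + self.server_processing_ms
                + self.render_ms + self.input_delay_ms)


timing = RequestTiming(network_rtt_ms=80, server_processing_ms=120,
                       render_ms=150, input_delay_ms=50)
print(timing.perceived_ms())                          # 400
print(timing.network_rtt_ms / timing.perceived_ms())  # 0.2
```

In this made-up case the network accounts for only a fifth of what the user feels, which is why a team that tunes the network alone can miss most of the delay.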

Common Performance Traps in Global-Scale Systems

As systems scale across regions and business demands grow, several recurring issues begin to surface:

Pitfalls to watch out for:

Over-centralized infrastructure: Hosting all services in a single region increases latency for remote users

Poorly placed APIs: Bottlenecks arise when core APIs are not mirrored across regions

Unoptimized DNS and routing: Suboptimal paths can introduce unnecessary delays

Heavy payload transfers: Sending large, uncompressed data objects from server to client without optimization

Proactively identifying these issues through observability tooling and architecture reviews can prevent performance degradation before it spreads globally.

Geographic Distribution of Infrastructure

Latency adds up fast when your users are scattered and your servers are not. Deploying edge locations and regional clusters is the first real defense. You’re bringing compute and data closer to end users, not just in theory but in milliseconds. Whether you’re running real-time apps or API-heavy backends, minimizing that physical and network distance matters.

Content Delivery Networks (CDNs) still do what they’ve always done (push static files closer to the user), but in 2026 they pull double duty. Modern CDNs handle dynamic routing, edge functions, and localized caching, giving global apps a serious boost. Think less lag, more snap.

Data strategy matters here too. Rule of thumb: replicate when read access is frequent and latency-sensitive; centralize when consistency is critical or the workload is write-heavy. But even that’s evolving with smarter database platforms and conflict-resolution models. The line isn’t as sharp as it used to be.

Intelligent routing is the glue. Real-time geolocation data lets your infrastructure steer user requests to the optimal server based not just on geography, but also on server health, time of day, or network congestion. It’s a moving target, but route right, and you cut down lag before the user even notices it was a risk.
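As a rough illustration of routing on more than geography, the sketch below picks a region by combining measured round-trip time with health and load. The `Region` fields, the region names, and the `load_penalty_ms` weighting are all assumptions made for the example, not a description of any particular load balancer.

```python
from dataclasses import dataclass


@dataclass
class Region:
    name: str
    rtt_ms: float   # measured round-trip time from the user to this region
    healthy: bool   # result of the latest health check
    load: float     # current utilization, 0.0 (idle) to 1.0 (saturated)


def pick_region(regions: list[Region], load_penalty_ms: float = 100.0) -> Region:
    """Return the healthy region with the lowest load-adjusted latency."""
    candidates = [r for r in regions if r.healthy]
    if not candidates:
        raise RuntimeError("no healthy region available")
    # A fully loaded region is treated as if it were load_penalty_ms slower.
    return min(candidates, key=lambda r: r.rtt_ms + r.load * load_penalty_ms)


regions = [
    Region("eu-west", rtt_ms=25, healthy=True, load=0.95),
    Region("eu-central", rtt_ms=40, healthy=True, load=0.30),
    Region("us-east", rtt_ms=15, healthy=False, load=0.10),
]
print(pick_region(regions).name)  # eu-central
```

The nearest region on paper (us-east) is skipped because it is unhealthy, and the next nearest (eu-west) loses out because it is close to saturation.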

Smarter Use of Caching

When you’re tuning for latency at a global scale, effective caching isn’t optional; it’s foundational. Front-end caching starts with the browser. It stores static assets, API responses, and even full pages locally, cutting out round trips entirely for repeated views. Then there are content delivery networks (CDNs) that sit between your user and your origin server, caching assets geographically closer to the user. CDNs aren’t just for images anymore; they now cache entire edge-rendered pages, dynamic content fragments, and API responses.

On the server side, distributed caches like Redis or Memcached are essential when milliseconds count. They serve hot data from RAM instead of slow disk or database queries. Layering cache tiers (browser, CDN, app-level, and data layer) can noticeably improve perceived performance, because each tier absorbs requests before they reach the next. Database read replicas in read-heavy architectures help offload the primary node, keeping things snappy, though replication lag means reads can be slightly stale unless consistency is configured carefully.
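The tiered lookup can be sketched in a few lines. This is a simplified, single-process illustration: plain dictionaries stand in for an in-process cache and for a distributed cache such as Redis, and `TieredCache` and `load_from_origin` are hypothetical names.

```python
class TieredCache:
    """Check fast tiers first; fall back to the origin and backfill on a miss."""

    def __init__(self, load_from_origin):
        self.local = {}    # stand-in for an in-process cache
        self.shared = {}   # stand-in for a distributed cache such as Redis
        self.load_from_origin = load_from_origin
        self.origin_calls = 0

    def get(self, key):
        if key in self.local:
            return self.local[key]
        if key in self.shared:
            self.local[key] = self.shared[key]  # promote to the faster tier
            return self.local[key]
        # Miss in every tier: pay the full cost once, then fill both tiers.
        self.origin_calls += 1
        value = self.load_from_origin(key)
        self.shared[key] = value
        self.local[key] = value
        return value


cache = TieredCache(load_from_origin=lambda key: f"row-for-{key}")
cache.get("user:42")
cache.get("user:42")
print(cache.origin_calls)  # 1
```

The second read never reaches the origin. Expiry and invalidation are left out here on purpose; they are the hard part.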

But caching has a dark side: staleness and inconsistency. Serving outdated data damages user trust. That’s why TTL (time-to-live) strategies matter: short TTLs keep data fresh but increase backend load; long TTLs reduce calls but risk staleness. Smart configurations might use layered TTLs or conditional invalidation to balance freshness and speed. Even better are cache-busting techniques triggered by data change events, not by clock ticks.

Bottom line: caching reduces latency, but you’ve got to design for nuance, not just speed. Blend short-term responsiveness with long-term reliability, and global users won’t just tolerate your app; they’ll stick around.
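Here is a minimal sketch of both ideas together: entries expire after a TTL, and an explicit `invalidate` call lets a data change event evict a key immediately. The `TTLCache` class and the injectable `clock` parameter are illustrative assumptions, not the API of any specific cache library.

```python
import time


class TTLCache:
    """Cache whose entries expire after ttl_seconds or on explicit invalidation."""

    def __init__(self, ttl_seconds, clock=time.monotonic):
        self.ttl = ttl_seconds
        self.clock = clock        # injectable so tests can control time
        self._entries = {}        # key -> (value, expiry timestamp)

    def set(self, key, value):
        self._entries[key] = (value, self.clock() + self.ttl)

    def get(self, key):
        entry = self._entries.get(key)
        if entry is None:
            return None
        value, expires_at = entry
        if self.clock() >= expires_at:
            del self._entries[key]  # expired: drop it and report a miss
            return None
        return value

    def invalidate(self, key):
        """Call this from a data change event instead of waiting for the TTL."""
        self._entries.pop(key, None)


prices = TTLCache(ttl_seconds=30)
prices.set("price:sku-1", 100)
prices.invalidate("price:sku-1")   # e.g. triggered when the price is updated
print(prices.get("price:sku-1"))   # None
```

The TTL acts as a safety net for missed events, while event-driven invalidation keeps hot keys fresh without shortening the TTL for everything.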

Protocol and Transport Layer Upgrades

If you’re building apps for users across continents, transport protocols matter. A lot. HTTP/3 and QUIC are leading the charge in cutting response times, especially in geographies where latency is baked into the internet cake: think remote APAC regions or parts of Africa. QUIC runs over UDP instead of TCP and folds the transport and TLS handshakes into one, which cuts connection setup time and allows multiplexed streams without TCP’s head-of-line blocking. Bottom line? Faster page loads, smoother streams, and less churn from frustrated users.
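The setup savings are easy to put numbers on. The sketch below assumes full handshakes with no TCP Fast Open or TLS session resumption, so a new connection costs one round trip for TCP plus two for TLS 1.2 or one for TLS 1.3, while QUIC needs one round trip for a new connection and none when 0-RTT resumption applies. The 200 ms round-trip time is a hypothetical figure for a long-haul link.

```python
# Round trips needed before the first request byte can be sent.
SETUP_ROUND_TRIPS = {
    "TCP + TLS 1.2": 3,           # 1 (TCP handshake) + 2 (TLS 1.2 handshake)
    "TCP + TLS 1.3": 2,           # 1 (TCP handshake) + 1 (TLS 1.3 handshake)
    "QUIC (new connection)": 1,   # transport and TLS handshakes combined
    "QUIC (0-RTT resumption)": 0, # request rides along with the first packet
}


def setup_delay_ms(rtt_ms: float) -> dict[str, float]:
    """Connection setup delay for each stack at a given round-trip time."""
    return {name: trips * rtt_ms for name, trips in SETUP_ROUND_TRIPS.items()}


for name, delay in setup_delay_ms(rtt_ms=200).items():
    print(f"{name}: {delay} ms")
```

At 200 ms per round trip, that is 600 ms and 400 ms of setup for the two TCP stacks versus 200 ms or 0 ms for QUIC, before any application data moves.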

By 2026, the line between use cases for TCP and UDP is getting blurrier. TCP still rules in legacy systems and high-reliability data transfers, but UDP, especially when paired with QUIC, is winning ground in real-time apps, mobile-first markets, and edge architectures where snappy performance is non-negotiable. Expect more streaming platforms, multiplayer games, and live collaboration tools to jump ship from TCP.

Compression used to be a stark tradeoff: smaller payloads, but more CPU time. Modern algorithms like Brotli and Zstandard soften it considerably, delivering strong size reduction at modest CPU cost when run at moderate levels. The trick is tuning the compression level based on device type and connection quality. Smarter servers can now auto-adjust encoding rules, trimming fat without adding lag.

In short, 2026 demands that developers build at the protocol level with latency in mind, because no one’s waiting around for your app to catch up.
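A sketch of level tuning by connection quality follows. It uses `zlib` from the Python standard library as a stand-in, because Brotli and Zstandard bindings are third-party packages; the idea of trading CPU for bytes carries over, but the level scale does not. The bandwidth thresholds and the `choose_level` and `compress_for` helpers are hypothetical.

```python
import zlib


def choose_level(downlink_mbps: float) -> int:
    """Map connection quality to a zlib level (1 = fastest, 9 = smallest)."""
    if downlink_mbps < 1:
        return 9   # slow link: spend CPU to save bytes on the wire
    if downlink_mbps < 10:
        return 6   # middle ground (zlib's usual default)
    return 1       # fast link: transfer is cheap, so keep CPU cost low


def compress_for(payload: bytes, downlink_mbps: float) -> bytes:
    """Compress a payload at a level suited to the client's connection."""
    return zlib.compress(payload, choose_level(downlink_mbps))


payload = b'{"sku": "A-100", "price": 19.99, "in_stock": true}' * 200
slow = compress_for(payload, downlink_mbps=0.5)
fast = compress_for(payload, downlink_mbps=50)
print(len(payload), len(slow), len(fast))
```

In a real service the connection estimate would have to come from somewhere, such as client hints or measured throughput, and the thresholds would need tuning against actual traffic.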

Application-Level Optimization Tactics

Optimizing at the application layer isn’t flashy, but it’s where latency can live or die. First up: lazy loading. If users don’t need everything at once, don’t deliver everything at once. Break your payloads into parts. That’s bandwidth you don’t waste, and milliseconds you save. Prefetching is the flip side: predict what the user might do next and get it ready in the background. When done right, it feels like magic. When overdone, it’s waste. So be sharp with it. Connection coalescing is less talked about, but it’s a quiet powerhouse. Reuse existing connections across requests instead of opening new ones, and avoid blowing up the networking cost with unnecessary handshakes.

As for fetching data, asynchronous is the law, not the luxury. Fetch in parallel, defer what’s not critical, stream results when possible. Blocking is the enemy.
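A small sketch of parallel fetching with `asyncio` shows the effect. The `fetch` coroutine sleeps instead of doing real I/O, and the endpoint names and delays are made up for the example.

```python
import asyncio
import time


async def fetch(name: str, delay_s: float) -> str:
    """Stand-in for a network call; sleeps instead of doing real I/O."""
    await asyncio.sleep(delay_s)
    return f"{name}-data"


async def load_dashboard() -> list[str]:
    # The three calls run concurrently, so total time tracks the slowest one
    # (about 0.10 s) rather than the sum of all three (0.23 s).
    return await asyncio.gather(
        fetch("profile", 0.05),
        fetch("orders", 0.10),
        fetch("recommendations", 0.08),
    )


start = time.perf_counter()
results = asyncio.run(load_dashboard())
elapsed = time.perf_counter() - start
print(results, f"{elapsed:.2f}s")
```

`asyncio.gather` returns results in the order the calls were passed in, regardless of which finished first, so downstream code does not need to re-sort them.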

Too many apps burn time on round trips. That’s a design issue. Smarter APIs are the fix: bundle what makes sense, avoid endless calls for trivial data, and plan for worst-case latency up front, not as an afterthought. REST is serviceable, but in thorny latency spots, GraphQL or gRPC gives you a better edge. GraphQL slices precisely what you need; gRPC slashes payload size and speeds things up. It’s not about fads; it’s about fit.
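To see what bundling buys, the sketch below counts round trips for a chatty client versus a batched one. `FakeApi` is an in-memory stand-in, not a real client library, and the 150 ms round-trip time is a hypothetical figure for a distant region. The chatty calls are assumed to run one after another.

```python
RTT_MS = 150  # hypothetical round-trip time to a distant region


class FakeApi:
    """In-memory stand-in for a remote API that counts round trips."""

    def __init__(self, users):
        self.users = users
        self.round_trips = 0

    def get_user(self, user_id):
        self.round_trips += 1   # one request per user
        return self.users[user_id]

    def get_users(self, user_ids):
        self.round_trips += 1   # one request for the whole batch
        return [self.users[uid] for uid in user_ids]


users = {i: {"id": i, "name": f"user-{i}"} for i in range(1, 21)}
ids = list(users)

chatty = FakeApi(users)
one_by_one = [chatty.get_user(uid) for uid in ids]

bundled = FakeApi(users)
in_one_call = bundled.get_users(ids)

print(chatty.round_trips * RTT_MS)   # 3000 (ms spent waiting on round trips)
print(bundled.round_trips * RTT_MS)  # 150
```

Both clients end up with the same data; only the time spent waiting on the network differs.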

Great performance isn’t an afterpatch. It’s baked in. And often, built at this level.

Infrastructure Cost vs. Performance: Smart Tradeoffs

Not every region offers the perfect balance of low latency and low costs. Some locations, especially in South Asia, parts of Africa, or Eastern Europe, come with higher round-trip times but much lower hosting or compute prices. For many teams, that tradeoff is worth it if they can reinforce performance smartly elsewhere.

The key is selective investment. You don’t need hyperspeed in every region, just the ones with active users. Use real-time traffic data to decide where edge computing makes sense, autoscaling nodes only when incoming load justifies the boost. No need to keep West Coast U.S. traffic blazing fast if your customers are waking up in Jakarta.

When paired with smart routing and intelligent caching, strategic deployment in high-latency, low-cost zones can slash cloud bills without wrecking UX. But it takes discipline: tight observability, precise thresholds for triggering autoscaling, and de-provisioning once traffic drops. Don’t over-engineer your global stack; engineer for where your users are.
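The threshold discipline can be sketched as a small decision function. It uses separate scale-up and scale-down thresholds so the node count does not flap when load hovers near a single cutoff. The function name, the requests-per-second thresholds, and the node limits are hypothetical, and the sketch assumes at least one node is always running.

```python
def desired_nodes(current: int, rps: float, up_per_node: float = 800,
                  down_per_node: float = 300, min_nodes: int = 1,
                  max_nodes: int = 10) -> int:
    """Add a node above the upper threshold, remove one below the lower."""
    per_node = rps / current  # assumes current >= 1
    if per_node > up_per_node:
        return min(current + 1, max_nodes)
    if per_node < down_per_node:
        return max(current - 1, min_nodes)  # de-provision once traffic drops
    return current


print(desired_nodes(current=2, rps=2000))  # 3 (1000 rps per node: scale up)
print(desired_nodes(current=3, rps=600))   # 2 (200 rps per node: scale down)
print(desired_nodes(current=2, rps=1000))  # 2 (500 rps per node: hold)
```

The gap between the two thresholds is the design choice that matters here: too narrow and nodes churn, too wide and you pay for idle capacity.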

For more on blending cost containment with elastic scaling, check out Lowering Cloud Bill Without Sacrificing Scalability.

Monitoring and Continuous Tuning

When it comes to global applications, what you don’t measure will slow you down. Real-time performance analytics, especially those powered by distributed tracing, are no longer optional. They let teams see how requests move through services, where they hang, and what part of the stack needs work. It’s not just about knowing something’s slow. It’s about knowing exactly why.
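The core idea behind a trace is a set of named, timed spans. The sketch below records spans inside one process with a context manager; real distributed tracing also propagates a trace ID across service boundaries, which is left out here. The `span` helper, the span names, and the sleep calls standing in for work are all illustrative.

```python
import time
from contextlib import contextmanager

spans = []  # collected (name, duration in ms) pairs for one request


@contextmanager
def span(name):
    """Time the enclosed block and record it as a named span."""
    start = time.perf_counter()
    try:
        yield
    finally:
        spans.append((name, (time.perf_counter() - start) * 1000))


# Simulated request: each sleep stands in for real work in that stage.
with span("auth"):
    time.sleep(0.01)
with span("db_query"):
    time.sleep(0.05)
with span("render"):
    time.sleep(0.02)

slowest = max(spans, key=lambda s: s[1])
print(slowest[0])  # db_query
```

Even this toy version answers the question that matters: not just that the request was slow, but which stage made it slow.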

Synthetic global testing takes that one step further by simulating worst-case latency across regions. Want to know how your checkout flow feels from rural Australia or a congested Wi-Fi network in Lagos? You can. Testing under controlled conditions means you don’t wait for real users to feel the pain.

Then there’s A/B testing, usually thought of in product or UI contexts, but just as vital for performance improvements. Maybe your new asset pipeline loads 10% faster, but do users stay longer, convert more, or bounce the same? Without hard data, it’s all guesswork. The serious teams are turning latency optimization into a metrics-driven discipline: test, measure, iterate.

Speed is a feature, and features should ship like everything else: with data to back them.
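Once synthetic probes are running, the results need to be summarized per region, and tail percentiles say more about worst-case experience than averages do. The sketch below uses a nearest-rank percentile. The sample timings, region names, and the 500 ms budget are invented for the example.

```python
import math


def percentile(samples, pct):
    """Nearest-rank percentile: the smallest value with pct% of samples at or below it."""
    ordered = sorted(samples)
    rank = math.ceil(pct / 100 * len(ordered))
    return ordered[max(rank - 1, 0)]


# Hypothetical checkout timings (ms) collected by synthetic probes.
samples_ms = {
    "frankfurt": [110, 120, 125, 130, 135, 140, 150, 160, 170, 180],
    "lagos": [300, 320, 350, 380, 400, 450, 500, 650, 800, 1200],
}
BUDGET_MS = 500

over_budget = [region for region, samples in samples_ms.items()
               if percentile(samples, 95) > BUDGET_MS]
print(over_budget)  # ['lagos']
```

Note that the median for the Lagos samples is 400 ms, inside the budget; it is only the tail that reveals the problem.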
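One common way to check whether a change in conversion is real is a two-proportion z-test, sketched below. This is one reasonable choice of test, not the only one, and the visitor and conversion counts are invented.

```python
import math


def two_proportion_z(conv_a: int, n_a: int, conv_b: int, n_b: int) -> float:
    """z-score for the difference between two conversion rates."""
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    return (p_b - p_a) / std_err


# Control: old asset pipeline. Variant: the faster one.
z = two_proportion_z(conv_a=500, n_a=10_000, conv_b=560, n_b=10_000)
significant = abs(z) > 1.96  # two-sided test at the 5% level
print(round(z, 2), significant)
```

With these made-up numbers the variant converts at 5.6% against 5.0%, yet the z-score of roughly 1.89 falls just short of the 1.96 cutoff. The faster pipeline looks better, but the data does not yet prove it.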

Looking Ahead

The future of latency optimization isn’t just about shaving milliseconds; it’s about changing the rules entirely. By 2026, the rapid rollout of 5G and the maturation of satellite internet are closing the gap between remote users and core infrastructure. These technologies won’t fix everything overnight, but they’re carving off big chunks of network lag, especially in underserved regions. For global applications, that means fewer blind spots and more consistent experiences across continents.

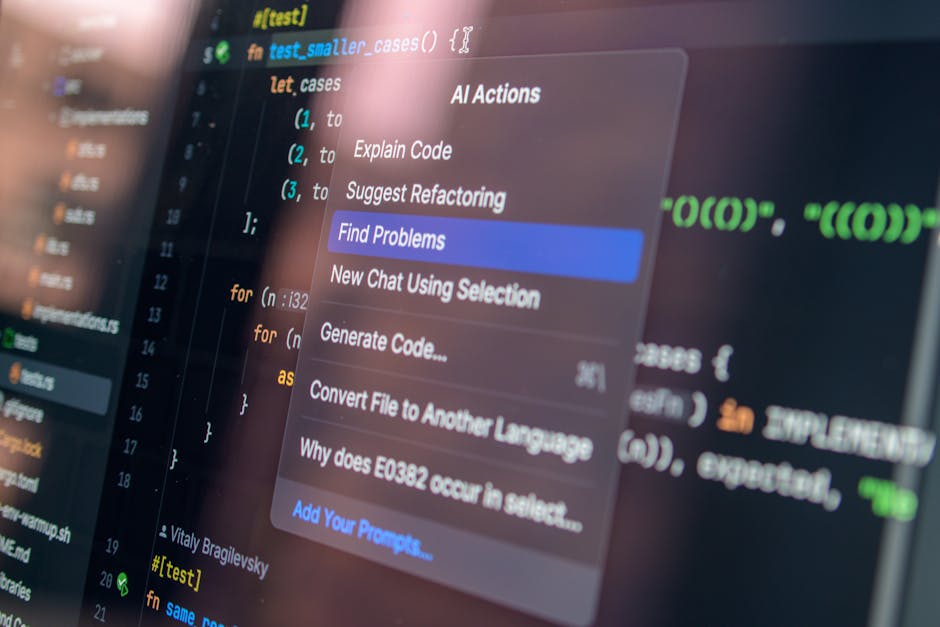

AI is stepping in too, quietly routing traffic away from bottlenecks in real time and auto-invalidating caches where stale data could hurt performance. Static rules and manual tweaks are giving way: AI-assisted systems are becoming the default, scanning patterns and rerouting with precision before humans even notice a lag spike.

On the dev side, the line between delivery and optimization is blurring. Continuous delivery pipelines are integrating performance benchmarks. Code is not just pushed; it’s monitored, analyzed, and optimized at the edge. Just-in-time latency tuning becomes the new build step.

It’s not a magic fix. But for teams aiming to run fast and global, these shifts are more than hype. They’re load-bearing.