The Shift Toward Smarter Machines

Robots in 2026 don’t just execute pre-written commands; they evolve. Instead of relying on rigid scripts, they observe, act, fail, and adjust. At the heart of this evolution is reinforcement learning (RL), a method that flips conventional programming on its head. Rather than being told what to do step by step, RL-powered robots figure things out for themselves, guided by feedback loops and goals.

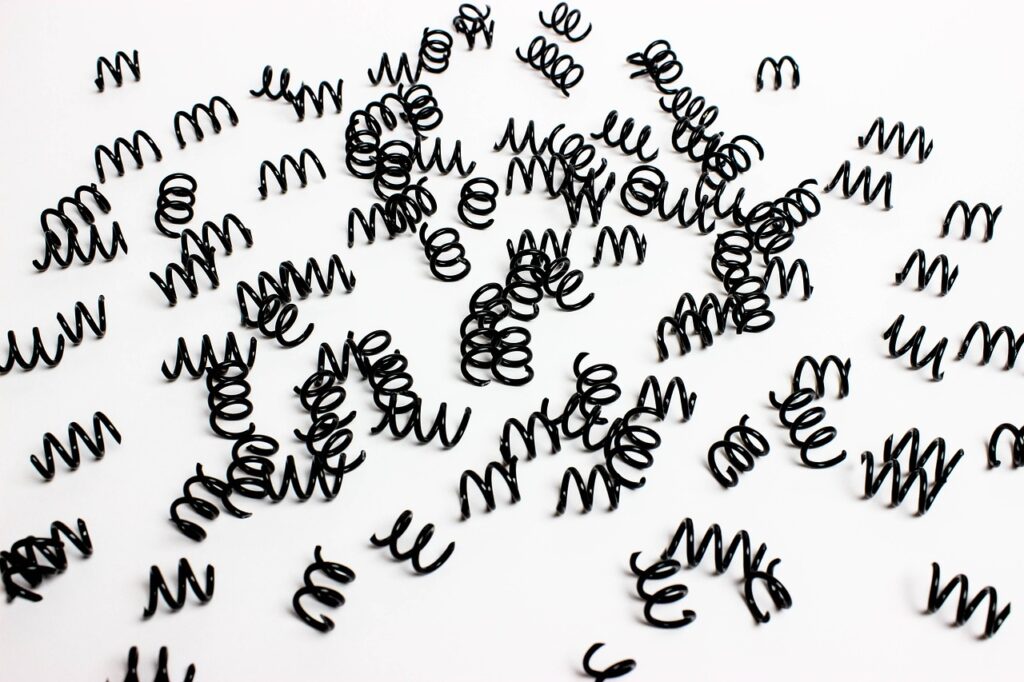

Trial and error is no longer a bug; it’s the feature. Robots try a range of actions, get scored on how well they perform, and then tweak their behavior to get better results next time. This learning loop mirrors the way living organisms adapt, and it’s making machines smarter, faster, and more flexible. As a result, instead of writing new code for every use case, developers are training robots to work out solutions to problems no one fully anticipated.

The payoff? Real-world resilience. From messy warehouses to dynamic home environments, RL is turning robots from rigid assistants into adaptable teammates.

Core Concepts Behind RL in Robotics

At the core of reinforcement learning (RL) are four key elements: the agent, the environment, the reward, and the policy. The agent is the robot, the environment is the world it operates in, the reward is the signal that tells it how well it’s doing, and the policy is its decision-making strategy, a mapping from what the robot observes to the action it takes. Simple on paper, but this tight loop is what’s teaching machines to think in action.

Here’s how it works: the robot (agent) takes an action in its environment. The environment reacts, moving into a new state and sending back a reward. The agent uses that reward to update its policy and make better decisions next time. This feedback loop keeps spinning, helping the robot improve over time without every step being hard-coded by humans.
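
To make that loop concrete, here is a minimal Python sketch. It is a stateless, bandit-style simplification that assumes a made-up task: the robot picks one of three grip strengths, and the environment returns a reward of 1 for a successful grasp and 0 otherwise. The success rates in `SUCCESS_PROB` and the names `environment_step` and `train` are invented for illustration. The policy is epsilon-greedy, meaning it explores at random a small fraction of the time and otherwise picks the action with the best estimated reward. The update is a running average of the rewards each action has earned. Real robot RL uses far richer states and learned function approximators, but the act, reward, update cycle is the same.

```python
import random

# Hypothetical task: pick one of three grip strengths. These success rates are
# invented for illustration; the agent never sees them directly.
SUCCESS_PROB = {"light": 0.2, "medium": 0.8, "firm": 0.5}


def environment_step(action, rng):
    """Environment: attempt the grasp and return reward 1.0 on success, else 0.0."""
    return 1.0 if rng.random() < SUCCESS_PROB[action] else 0.0


def train(episodes=2000, epsilon=0.1, seed=0):
    rng = random.Random(seed)
    actions = list(SUCCESS_PROB)
    values = {a: 0.0 for a in actions}  # agent's running estimate of each action's reward
    counts = {a: 0 for a in actions}
    for _ in range(episodes):
        # Policy: epsilon-greedy. Explore at random occasionally, otherwise exploit.
        if rng.random() < epsilon:
            action = rng.choice(actions)
        else:
            action = max(actions, key=values.get)
        reward = environment_step(action, rng)  # environment reacts with a reward
        # Update: incremental mean of the rewards observed for this action.
        counts[action] += 1
        values[action] += (reward - values[action]) / counts[action]
    return values


if __name__ == "__main__":
    learned = train()
    print(learned, "->", max(learned, key=learned.get))
```

The agent is never told which grip works best. It discovers that "medium" pays off most purely from the rewards it collects.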

Early RL systems learned in toy simulations: basic mazes, object stacking, or grid worlds. But things have leveled up fast. Now RL is helping robots adapt to real-world conditions: navigating ground clutter, adjusting grip strength mid-task, or choosing movement patterns on new terrain. It’s the grind of repetition and feedback, done at machine scale, that’s pushing robotics into smarter territory.

Real World Applications Taking Off

Reinforcement learning isn’t stuck in the lab anymore. It’s out in the wild, and it’s already reshaping how robots operate across critical industries.

Automated Warehouses

Warehouses have become pressure cookers of speed and efficiency. RL helps robots move smarter, not just faster. Instead of blindly following preset routes, these bots learn optimal paths over time. They avoid collisions, adapt to traffic patterns, and dynamically reroute in cluttered environments. It’s not just safer; it’s more productive.
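
Route learning like this is often framed as tabular Q-learning on a grid. The sketch below is a toy, not a production warehouse planner. The 4x4 floor layout, the rewards (-1 per move, -5 for bumping a shelf or wall, +10 at the pick station) and the hyperparameters are all assumptions chosen for illustration, and `step`, `q_learning` and `greedy_route` are hypothetical helper names. The agent learns a value Q(s, a) for every position and move, nudging it toward reward + gamma * max Q(next state, any move). It then follows the highest-valued moves.

```python
import random

# Hypothetical 4x4 warehouse floor: S = start, G = pick station, # = shelf.
GRID = [
    "S..#",
    ".#..",
    "...#",
    "#..G",
]
ACTIONS = {"up": (-1, 0), "down": (1, 0), "left": (0, -1), "right": (0, 1)}
START = (0, 0)


def step(state, action):
    """Toy environment: return (next_state, reward, done) for one move."""
    r, c = state
    dr, dc = ACTIONS[action]
    nr, nc = r + dr, c + dc
    # Leaving the floor or hitting a shelf is a "collision": stay put, pay a penalty.
    if not (0 <= nr < len(GRID) and 0 <= nc < len(GRID[0])) or GRID[nr][nc] == "#":
        return state, -5.0, False
    if GRID[nr][nc] == "G":
        return (nr, nc), 10.0, True
    return (nr, nc), -1.0, False  # every ordinary move costs time


def best_action(q, state):
    """Greedy choice: the move with the highest learned value (unseen pairs count as 0)."""
    return max(ACTIONS, key=lambda a: q.get((state, a), 0.0))


def q_learning(episodes=1000, alpha=0.5, gamma=0.95, epsilon=0.2, seed=0):
    rng = random.Random(seed)
    q = {}  # maps (state, action) -> estimated return
    for _ in range(episodes):
        state = START
        for _ in range(100):  # cap episode length so early wandering terminates
            if rng.random() < epsilon:
                action = rng.choice(list(ACTIONS))  # explore
            else:
                action = best_action(q, state)  # exploit
            nxt, reward, done = step(state, action)
            future = 0.0 if done else max(q.get((nxt, a), 0.0) for a in ACTIONS)
            old = q.get((state, action), 0.0)
            # Q-learning update toward reward + discounted best next value.
            q[(state, action)] = old + alpha * (reward + gamma * future - old)
            state = nxt
            if done:
                break
    return q


def greedy_route(q, max_steps=20):
    """Follow the learned policy from START and record the cells visited."""
    state, route = START, [START]
    for _ in range(max_steps):
        state, _, done = step(state, best_action(q, state))
        route.append(state)
        if done:
            break
    return route


if __name__ == "__main__":
    print(greedy_route(q_learning()))
```

Because bumping a shelf costs more than an ordinary move, the learned route avoids collisions as a side effect of maximizing reward, not because a hand-written rule forbids them.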

Assistive Robots

In hospitals, homes, and public spaces, robots are getting better at reading the room. RL teaches them to interpret and react to human behavior in real time. Whether it’s passing medication to a patient or following someone through a busy hallway, assistive machines are learning through trial and reinforcement. As a result, their interactions feel less robotic and a lot more intuitive.

Search and Rescue

Disaster zones are unpredictable. That’s where RL shows real nerve. Robots learn to traverse chaotic terrain: uneven ground, debris, shifting obstacles. Pre-programmed routes won’t cut it here. What works one minute might fail the next, and RL enables robots to adjust on the fly. These are machines that don’t freeze when the map runs out.

In short, reinforcement learning lets robots step up in real-world scenarios, not by being told what to do but by discovering what works.

Key Technologies Driving Progress

Reinforcement learning advances robotics not just through smarter decision-making, but by teaming up with other powerful technologies.

Start with vision. RL combined with real-time computer vision gives robots dynamic perception. Instead of passively tracking objects, machines can now recognize, evaluate, and act on them, whether that means manipulating fragile materials on a factory floor or sorting packages on the fly. This tight coupling of RL and vision makes on-the-spot problem-solving possible.

But training these models in the real world? Risky, slow, expensive. That’s why simulation platforms like MuJoCo and PyBullet matter. They offer physics-rich, customizable environments where bots can fail fast and learn faster without breaking hardware. Developers test edge cases, tune parameters, and push models to their limits before ever touching physical systems.

None of this would move quickly without muscle under the hood. High-performance computing clusters and cloud-based pipelines give teams the firepower to run massive numbers of training cycles, crunch tons of data, and iterate in near real time. You’re not waiting days to test a policy update; you’re pushing it live before lunch.

If you’re a developer looking to jump in, it starts with the right tools. The top Python libraries for building machine learning models are a solid gateway into the world of RL in robotics. Learn the tools, build the muscle, then let your bots learn the rest.

Challenges Still on the Table

Reinforcement learning has made big promises in robotics, but reality still bites in some key areas. First up: sample inefficiency. RL systems typically need a ridiculous number of training cycles before they become useful. It’s like teaching a robot to walk by letting it fall over ten thousand times. This isn’t just slow; it’s expensive when physical hardware is involved.

Then comes the real-world risk. A robot exploring through trial and error sounds cool until it accidentally knocks over someone’s coffee maker, or worse. Safety isn’t optional in physical environments, which is why most RL training happens in simulation first. Even so, what works in sim doesn’t always transfer cleanly. That’s the infamous “sim2real” gap: variables like friction, lighting, and unpredictable human behavior throw trained models for a loop.
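
One widely used way to narrow the sim2real gap is domain randomization. Instead of training in a single, perfectly tuned simulator, you resample physics parameters such as friction for every episode, so the policy can’t overfit to one setting. The toy below illustrates the idea under stated assumptions. The task is to launch a box so it slides 1 m, with slide distance following v^2 / (2 * mu * g). The friction range of 0.3 to 0.7 and the nominal value of 0.5 are made-up numbers. To keep the sketch short, a simple grid search over launch speed stands in for an RL optimizer.

```python
import random

G = 9.81               # gravity, m/s^2
TARGET = 1.0           # hypothetical goal: box should slide 1 m
NOMINAL_MU = 0.5       # friction the simulator was "tuned" to (assumed)
MU_RANGE = (0.3, 0.7)  # assumed spread of friction in the real world


def slide_distance(v, mu):
    """Toy physics: a box launched at speed v slides v^2 / (2 * mu * g) metres."""
    return v * v / (2 * mu * G)


def mean_sq_error(v, frictions):
    return sum((slide_distance(v, mu) - TARGET) ** 2 for mu in frictions) / len(frictions)


def train(randomize, n=2000, seed=0):
    """Pick the launch speed that minimizes error over n training episodes.

    With randomize=True each episode draws a fresh friction value (domain
    randomization); otherwise every episode uses the nominal simulator.
    Grid search stands in for an RL optimizer to keep the sketch short.
    """
    rng = random.Random(seed)
    if randomize:
        frictions = [rng.uniform(*MU_RANGE) for _ in range(n)]
    else:
        frictions = [NOMINAL_MU] * n
    candidates = [2.0 + 0.01 * i for i in range(201)]  # 2.00 .. 4.00 m/s
    return min(candidates, key=lambda v: mean_sq_error(v, frictions))


if __name__ == "__main__":
    # Held-out friction values play the role of a "reality" the policy never trained on.
    test_rng = random.Random(42)
    reality = [test_rng.uniform(*MU_RANGE) for _ in range(2000)]
    for randomize in (False, True):
        v = train(randomize)
        print(f"randomize={randomize}: v={v:.2f} m/s, "
              f"error on held-out friction={mean_sq_error(v, reality):.4f}")
```

Under these assumptions, the randomized run settles on a slightly lower launch speed, because low-friction draws make overshooting costly. It also scores a lower error on the held-out friction values than the speed tuned to the single nominal simulator.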

The bottom line: we’re making progress, but these challenges still block the path to true autonomy. Smarter sampling methods, safer testing protocols, better sim realism: all of it needs to improve before robots truly learn like living things.

The Road Ahead

The next wave of robotics won’t be specialized arms bolted to factory floors. It’ll be multipurpose machines capable of learning on their own: generalist robots that adapt as situations change. One bot, many jobs. That’s the direction we’re headed, and reinforcement learning is laying the groundwork.

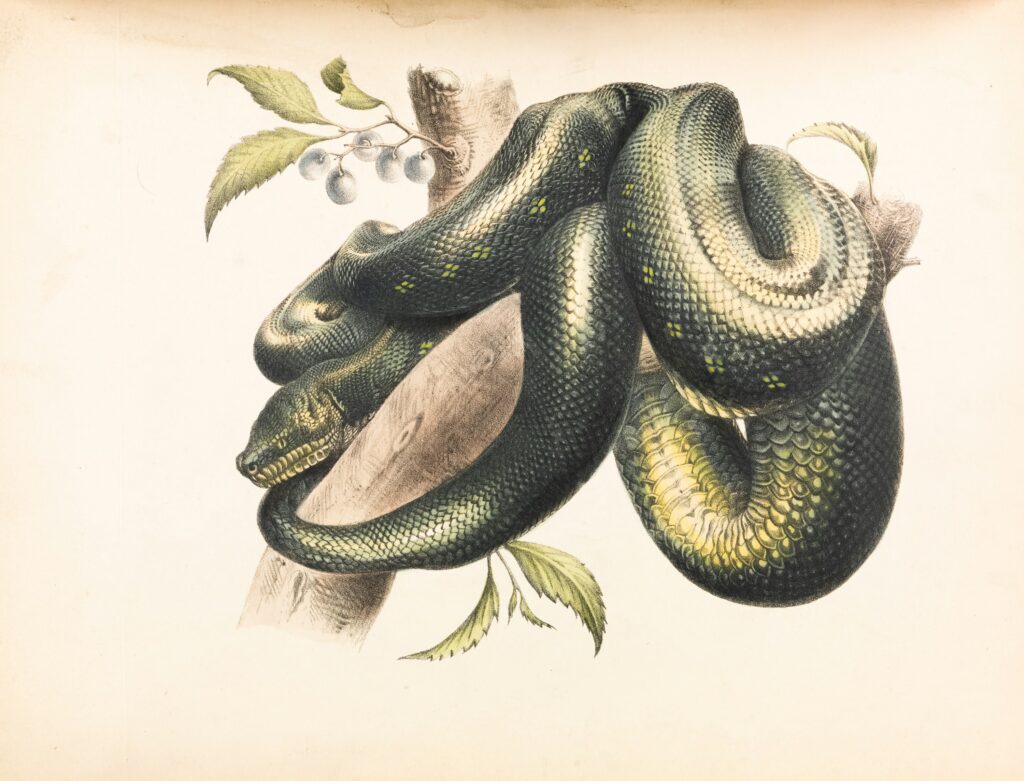

What’s pushing this forward isn’t just better code. It’s cross-pollination. RL is now drawing on insights from neuroscience and cognitive science to make robots more intuitive, more responsive, and more human-friendly. Researchers are asking how living beings learn, then building systems that mimic it.

We’re moving from rigid workflows to machines that improve through experience. This isn’t automation; it’s autonomy. These are robots that don’t just follow instructions but figure out the best ones. As this field matures, creators and engineers will need to think less like programmers and more like teachers. Training a robot becomes less about scripting every detail and more about shaping an environment that encourages learning.