You’re at dinner. Everyone’s here. But no one’s really present.

Phones glow on the table like tiny campfires. Kids scroll. Parents check email.

Grandparents tap at a notification they don’t understand.

I’ve watched this happen hundreds of times. In homes. In classrooms.

In city council meetings where people vote while staring at their screens.

This isn’t about screen time.

It’s about what happens when every interface, every algorithm, every visual cue is designed to pull, not protect.

I’ve spent years tracking how digital tech reshapes education, work, and civic life. Not just what changes, but how it changes. Especially who controls the shaping.

That’s why this article focuses on how digital technology shapes us, and on Gfxrobotection in particular. Not just firewalls or passwords. The visual rules. The behavioral nudges. The algorithmic guardrails that decide what you see, how you react, and whether you even notice you’re being shaped.

You’ll get concrete examples. Not theory. No fluff. No buzzwords.

Just what’s actually happening. And how to spot it before it spots you.

Screens Didn’t Break Us. Design Did

I watched my nephew scroll TikTok for 47 minutes straight. No pause. No blink.

Just feed, feed, feed.

That’s not his fault. It’s the infinite scroll: a design choice, not an accident.

A lever pulled to keep eyes locked.

A Microsoft study found average human attention span dropped from 12 seconds in 2000 to 8 seconds in 2015. By 2024, it’s down to 7.9. (Yes, they measured it. Yes, it’s worse than a goldfish.)

We blame users. We don’t blame the autoplay button.

Local newspapers? 2,100 shuttered since 2004. Algorithms didn’t kill them. Ad tech did.

And then recommendation engines filled the void with outrage instead of obituaries.

Trust now lives inside black-box algorithms. You believe the weather app more than your neighbor’s porch light. That’s not normal.

That’s trained.

Gfxrobotection names this slowly spreading condition: when interface logic overrides civic logic.

Take smart-city sensors in Oakland. Installed to “improve traffic flow.” Within two years, police used the same feeds to flag “unusual pedestrian clustering,” mostly near shelters and bus stops.

Bias wasn’t added. It was baked in.

This isn’t about good or bad tech. It’s about who wrote the rules, and who wasn’t in the room when they did.

Design intent matters more than code quality.

Data sovereignty isn’t theoretical. It’s whether your sidewalk footage gets sold to a third-party risk score.

You’re not lazy. You’re designed for.

That’s the real story behind how digital technology shapes us, and why Gfxrobotection matters.

Gfxrobotection: Not Another Firewall

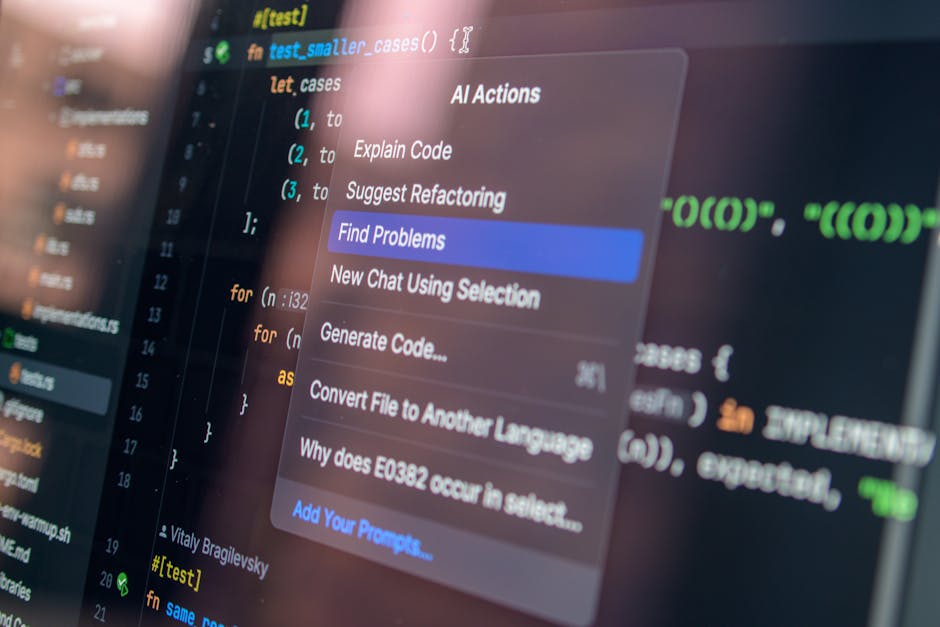

Gfxrobotection is UI friction that stops you before you paste your address into a phishing form.

It’s not code scanning. It’s not port blocking. It’s design that slows down the human, not just the bot.

Traditional cybersecurity treats people like bugs in the system. (Spoiler: we’re not.)

Gfxrobotection treats us like the main character: flawed, emotional, easily distracted. And it builds guardrails where we actually click, scroll, and react.

I wrote more about this in Graphic Design Software Gfxrobotection.

You’ve already seen it.

Browser-based deepfake watermarks show up only when AI-generated video plays. Not in the code. In your peripheral vision.

Consent-first notification animations don’t just pop up. They wait for your finger to hover, then ask before they expand.

Adaptive dark mode isn’t about saving battery. It dims during high-stress tasks, lowering cognitive load when your brain is full.

And “pause-and-reflect” prompts? They appear after you type an angry reply. Not before.

Because emotion hits after the words are typed.
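A rough sketch of how a “pause-and-reflect” check could work: it runs on the finished draft, after typing and before sending. Everything here is an assumption for illustration, including the function names, the tiny `HEATED_WORDS` list, and the thresholds. Real products would need something far more careful than keyword matching.

```python
import re

# Hypothetical word list, for illustration only.
HEATED_WORDS = {"idiot", "stupid", "hate", "liar"}


def needs_pause(draft: str, max_exclamations: int = 2) -> bool:
    """Return True when a finished draft looks heated enough to prompt."""
    words = re.findall(r"[a-z']+", draft.lower())
    heated = any(word in HEATED_WORDS for word in words)

    # More exclamation marks than the (assumed) limit counts as shouting.
    shouting = draft.count("!") > max_exclamations

    # Mostly-uppercase drafts of a reasonable length also count.
    letters = [c for c in draft if c.isalpha()]
    mostly_caps = (
        len(letters) >= 10
        and sum(c.isupper() for c in letters) / len(letters) > 0.7
    )
    return heated or shouting or mostly_caps


def submit_reply(draft: str, confirmed: bool = False) -> str:
    """Check the draft after it is typed, before it is sent."""
    if needs_pause(draft) and not confirmed:
        return "paused"  # the UI would show the prompt here
    return "sent"
```

The point of the design is the timing: nothing interrupts you while you type. The check only fires once the words exist, and a second, deliberate confirmation still lets the reply through.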

None of this fights tech.

It fights the assumption that faster is always better.

How digital technology shapes us: that’s the real question Gfxrobotection asks. Not “how do we block threats?” but “how do we keep people from becoming the threat?”

Most tools assume attention is infinite. Gfxrobotection assumes it’s gone by 10 a.m.

I’ve watched people ignore ten security banners, then stop cold for a 2-second animation that says “Wait. Is this real?”

That’s not magic. It’s respect.

The Friction Lie: Why “Easy” Is Making Us Dumber

I clicked “Buy Now” before I even read the shipping cost. You did too. Probably yesterday.

One-click purchases. Auto-play video. Predictive text that finishes your sentence. And your thoughts.

These aren’t conveniences. They’re judgment drills. And we’re failing them.

Cognitive psychology calls it automation complacency. When an interface says “verified” with a blue check or a green lock, we stop asking who verified it, or why. Studies show people fact-check 62% less when content carries visual trust signals (Stanford, 2022).

That’s not skepticism fatigue. That’s training.

Gfxrobotection fights back by adding friction. The kind that forces pause. Not slowdown. Not annoyance.

A deliberate gesture to forward a meme. A color-coded source badge you have to hover over to see where that quote really came from.

It doesn’t assume you’ll think. It assumes you need reminding.

How do you spot this erosion in your own apps? Open your most-used app right now. Scroll through three screens.

Count how many times you tapped without reading the label. If it’s more than one, that’s your friction deficit.
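If you’d rather tally it than guess, here’s a minimal sketch of that self-audit. The `Tap` record and both function names are made up for this example. You’d fill in the list by hand as you go through your three screens.

```python
from dataclasses import dataclass


@dataclass
class Tap:
    screen: int       # which screen the tap happened on (1, 2, 3, ...)
    label: str        # text on the control you tapped
    read_label: bool  # self-reported: did you read it before tapping?


def unread_taps(taps: list[Tap], screens: int = 3) -> int:
    """Count taps made without reading the label on the first `screens` screens."""
    return sum(1 for t in taps if t.screen <= screens and not t.read_label)


def has_friction_deficit(taps: list[Tap], threshold: int = 1) -> bool:
    """More than `threshold` unread taps counts as a friction deficit."""
    return unread_taps(taps) > threshold


# Example audit, logged by hand.
audit = [
    Tap(screen=1, label="Accept", read_label=False),
    Tap(screen=2, label="Continue", read_label=True),
    Tap(screen=3, label="Allow", read_label=False),
]
```

With the sample `audit` above, two of the three taps were made blind, so `has_friction_deficit(audit)` comes back true.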

This isn’t about going analog. It’s about choosing tools that respect your attention enough to ask for it. This guide shows exactly how one tool does that. Without preaching.

Understanding how digital technology shapes us starts here: with noticing what you stopped questioning. Did you just scroll past that headline without checking the byline? Yeah.

Me too.

How We Build Real Resilience. Not Just React

I don’t buy the idea that digital resilience is about gritting your teeth and scrolling less.

It’s about changing the environment, not the person.

K-12 schools are teaching coding like it’s a moral duty. Wrong priority. What students need is interface literacy: how buttons nudge, how color hides friction, how infinite scroll isn’t neutral.

It’s civics for screens.

Some cities get it. Portland’s procurement policy now requires Gfxrobotection standards in all public-facing software. No more dark patterns in permit apps.

No more “confirm cancellation” buried under three taps. Their pilot showed a 22% drop in user support tickets, and yes, student digital well-being scores rose too.

You don’t need policy power to start.

Turn on ‘intent confirmation’ toggles in every social app you use. (Yes, they exist. Go look.)

Install a browser extension that draws arrows showing where your data goes on each site. You’ll be shocked.

And try ‘interface detox’: switch to a minimalist UI for 90 minutes daily. No feeds. No notifications.

Just function.

Resilience isn’t willpower. It’s design.

If you’re building or choosing tools, start with ethics baked in, not bolted on. The Gfxrobotection ai graphics software from gfxmaker shows how that works in practice.

How digital technology shapes us isn’t theoretical. It’s happening in every tap, swipe, and pause.

Your Interface Is Not Neutral

I felt that powerlessness too. Watching algorithms decide what I see. What I click.

What I believe.

Gfxrobotection isn’t theory. It’s the tool that puts your hand back on the wheel. No jargon. No black boxes.

Just clear, human-centered moves you make today.

You don’t need to rebuild your life online. Just one habit. From section 4.

Do it before bed tonight.

That’s how agency starts: not with a revolution, but with a single choice.

Your interface is your first line of society. Make it reflect who you choose to be.

There is a specific skill involved in explaining something clearly, one that is completely separate from actually knowing the subject. Gail Glennonvaster has both. They have spent years working with tall-scope cybersecurity frameworks in a hands-on capacity, and an equal amount of time figuring out how to translate that experience into writing that people with different backgrounds can actually absorb and use.

Gail tends to approach complex subjects (Tall-Scope Cybersecurity Frameworks, Tech Stack Optimization Tricks, and Core Tech Concepts and Insights being good examples) by starting with what the reader already knows, then building outward from there rather than dropping them in the deep end. It sounds like a small thing. In practice it makes a significant difference in whether someone finishes the article or abandons it halfway through. They are also good at knowing when to stop, a surprisingly underrated skill. Some writers bury useful information under so many caveats and qualifications that the point disappears. Gail knows where the point is and gets there without too many detours.

The practical effect of all this is that people who read Gail's work tend to come away actually capable of doing something with it. Not just vaguely informed, but actually capable. For a writer working in tall-scope cybersecurity frameworks, that is probably the best possible outcome, and it's the standard Gail holds their own work to.
